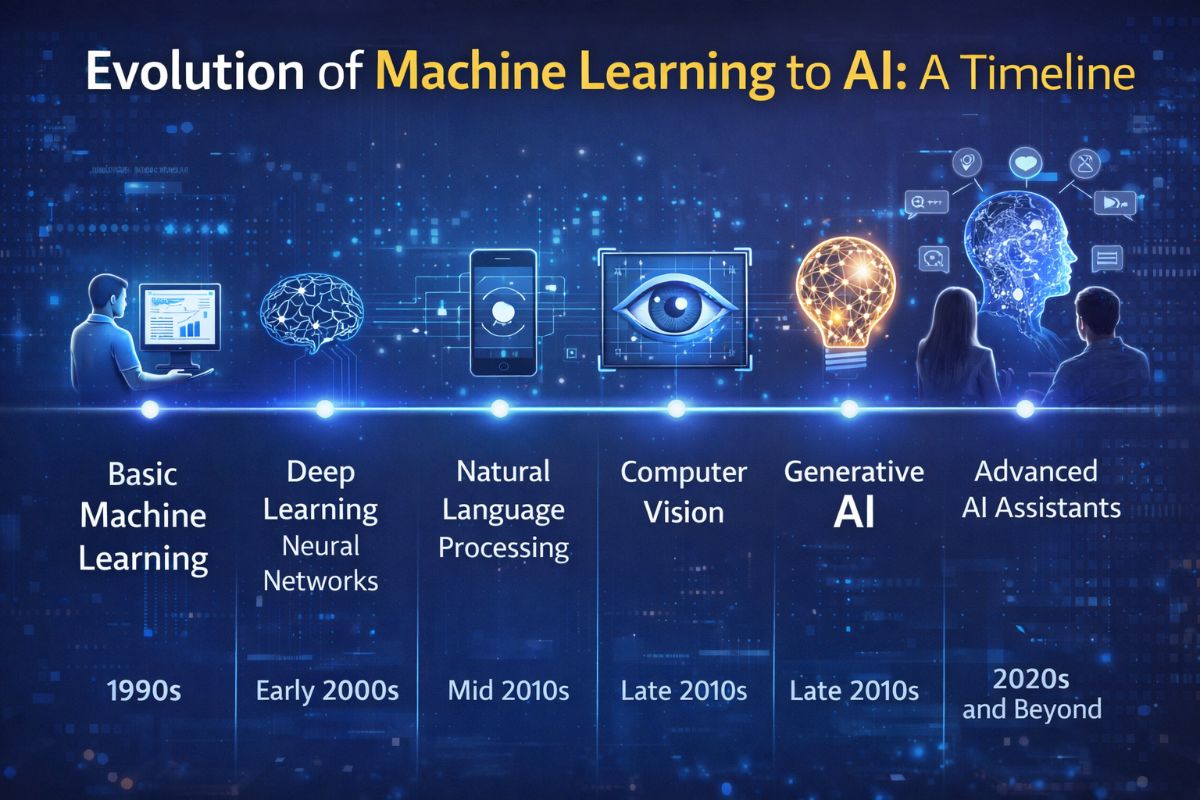

Evolution of Machine Learning to AI: A Timeline of the Intelligence Revolution

The journey from simple statistical models to the current era of generative agents is one of the most significant technological transformations in human history. To understand where we are going, we must analyze the structural shifts in how machines learn and process information. Some industry forecasts project that the global AI market will reach approximately $1.8 trillion by 2030, driven by a pivot from analytical tools to autonomous generative systems.

The Era of Symbolic AI and Rule-Based Systems (1950s – 1980s)

Early AI was rooted in "symbolic logic." The goal was to program machines with explicit rules: if-then statements that mimicked human decision-making. While groundbreaking, these systems were brittle; they couldn't handle the nuance or unpredictability of real-world data. During this period, the focus was on programs built for specific, narrow tasks, such as expert systems for medical diagnosis and game-playing programs for chess.

- 1950: Alan Turing publishes "Computing Machinery and Intelligence," proposing the Turing Test.

- 1956: The Dartmouth Workshop establishes AI as a field of research.

- Mid-to-late 1970s: The first "AI Winter" occurs as expectations outpace the computational capabilities of the time and research funding contracts.
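The if-then style of this era can be sketched in a few lines of Python. This is a toy illustration, not a reconstruction of any historical system: the function name `diagnose`, the symptom keys, and the thresholds are all hypothetical and carry no medical meaning.

```python
# Hypothetical hand-written rules for a toy triage system.
# Thresholds and labels are illustrative only, not medical guidance.

def diagnose(symptoms: dict) -> str:
    """Apply explicit if-then rules in a fixed order and return a label."""
    temperature = symptoms.get("temperature_c", 37.0)
    has_cough = symptoms.get("cough", False)

    if temperature >= 38.0 and has_cough:
        return "possible flu"
    if temperature >= 38.0:
        return "fever of unknown cause"
    if has_cough:
        return "possible cold"
    # Anything the rule author did not anticipate falls through here.
    return "no rule matched"


print(diagnose({"temperature_c": 38.5, "cough": True}))
print(diagnose({"temperature_c": 36.8, "sore_throat": True}))
```

The second call shows the brittleness described above: "sore_throat" is not covered by any rule, so the system has nothing to say about it until someone writes a new rule by hand.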

The Rise of Statistical Machine Learning (1990s – 2010)

As computational power increased, the paradigm shifted from "hand-coding rules" to "learning from data." This era saw the rise of Machine Learning (ML), where algorithms like Random Forests and Support Vector Machines were used to identify patterns in large datasets. This period laid the groundwork for modern enterprise solutions like IBM Watson, which demonstrated the power of processing vast amounts of unstructured text to win Jeopardy! in 2011.

Key developments included the refinement of backpropagation (popularized in the 1980s) for training neural networks. Specialized frameworks like Caffe followed shortly after this period, in 2013, and allowed researchers to build deep learning architectures more efficiently. The focus here was Analytical AI: predicting what will happen based on what has already occurred (e.g., credit scoring or stock market trends).
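To make "learning from data" concrete, here is a minimal sketch of a one-feature classifier (a decision stump) for a credit-scoring style problem. The data in `history` is made up, and `fit_threshold` and `predict` are hypothetical helper names. Algorithms such as Random Forests combine many learned splits of this kind rather than a single one.

```python
# Toy, made-up history: (annual income in thousands, loan repaid?)
history = [(18, False), (22, False), (30, False),
           (41, True), (55, True), (70, True)]


def fit_threshold(rows):
    """Pick the income cutoff that misclassifies the fewest past examples."""
    best_cutoff, best_errors = None, len(rows) + 1
    for cutoff in sorted(income for income, _ in rows):
        # Predict "repaid" when income >= cutoff and count disagreements.
        errors = sum((income >= cutoff) != repaid for income, repaid in rows)
        if errors < best_errors:
            best_cutoff, best_errors = cutoff, errors
    return best_cutoff


cutoff = fit_threshold(history)


def predict(income):
    """Predict repayment for a new applicant using the learned cutoff."""
    return income >= cutoff


print(cutoff)       # 41
print(predict(60))  # True
print(predict(25))  # False
```

Nobody wrote the rule "income of at least 41" by hand; it was derived from past outcomes, and it would change if the historical data changed.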

The Deep Learning Explosion and the Transformer Revolution (2012 – 2021)

In 2012, AlexNet won the ImageNet competition by a massive margin, proving that deep neural networks could outperform traditional ML in vision tasks. This sparked a gold rush in neural network research. Organizations like Google DeepMind pushed the boundaries further with AlphaGo, defeating a world champion in the complex game of Go in 2016.

The true turning point, however, came in 2017 with the Google research paper "Attention Is All You Need," which introduced the Transformer architecture. Unlike earlier recurrent models that processed data one step at a time, Transformers use attention to relate every position in a sequence to every other position in parallel, which made training at massive scale practical. This contributed to the democratization of AI through platforms like Hugging Face, which provided the community with open-access pre-trained models.
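The core operation behind this, scaled dot-product attention, can be sketched in plain Python. This is a simplified illustration under stated assumptions: the function names and the toy token vectors are made up, and a real Transformer additionally uses learned projection matrices, multiple attention heads, and batched matrix operations on accelerators instead of Python loops.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention: each query attends to every key."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity between this query and every key in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs


# Three toy token vectors (made-up numbers), reused as queries, keys, values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = self_attention(tokens, tokens, tokens)
for row in result:
    print([round(x, 3) for x in row])
```

Each output row depends only on its own query plus the shared keys and values, not on any other output row. That independence is what allows all positions to be computed at once as a single matrix product in practice.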

The Generative Era: From Chatbots to Agents (2022 – Present)

We are currently living in the Generative AI era. The release of ChatGPT by OpenAI in November 2022 brought Large Language Models (LLMs) to the masses. These models don't just analyze; they create. We have moved from simple text completion to multimodal models such as Claude and Google Gemini, which can reason across text, images, and, depending on the model, audio and video.

The Current Shift: Toward Agentic AI

We are now moving beyond "chat" into the realm of Autonomous Agents. Tools are no longer just responding to prompts; they are executing complex workflows. Examples include:

- Software Engineering: Devin by Cognition is presented as an autonomous software engineer that can plan and execute multi-step coding projects.

- Data Interaction: Systems like AskYourDatabase allow users to query SQL databases using natural language, turning complex data science into a simple conversation.

- Productivity: Platforms like Taskade integrate AI agents into project management to automate task generation and workflow orchestration.

Why the Transition from ML to AI Matters

The transition from Machine Learning to modern Artificial Intelligence represents a move from correlation to context. Traditional ML identifies that "X usually follows Y." Large pre-trained models learn representations that capture much of the semantic meaning of X and Y, which lets them apply that knowledge to entirely new scenarios. This "zero-shot" capability, meaning a task is performed without task-specific training examples, is what allows a single model to write a poem, debug code, and plan a travel itinerary without being specifically trained for those tasks.

The Future: Reasoning and Deep Research

Looking forward, the industry is pivoting toward Reasoning Models. As seen with releases like OpenAI's o1 series, the focus is on "Chain of Thought" processing, where the model generates intermediate reasoning steps before producing its final answer. This is intended to reduce hallucinations and increase the reliability of AI in high-stakes environments like law, medicine, and scientific research. The evolution is far from over; we are witnessing the birth of a general-purpose digital intellect that will redefine every aspect of human productivity.