Decoding the Biological Blueprint: The Inspiration Behind Deep Learning

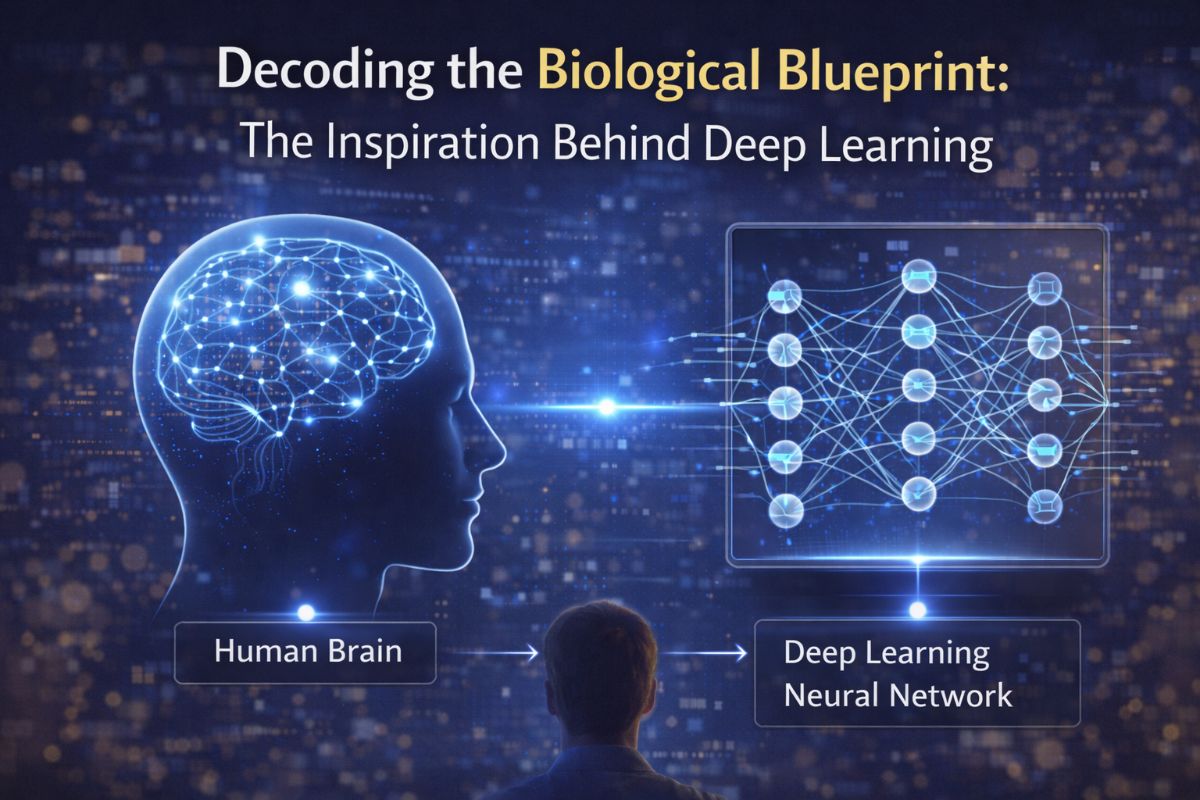

In the rapidly evolving landscape of artificial intelligence, one question remains central to both enthusiasts and skeptics: What is the main inspiration behind deep learning algorithms? The answer is as profound as it is complex. At its core, deep learning is a computational approach loosely modeled on the structure and function of the human brain.

While traditional programming relies on explicit instructions, deep learning algorithms (a subset of machine learning) learn patterns from data using artificial neural networks inspired by their biological counterparts. This biological mimicry has catalyzed a paradigm shift, moving the industry from simple analytical AI to the sophisticated generative capabilities seen in models from OpenAI and Google DeepMind.

The Neuron: From Biology to Bit

The fundamental unit of the human brain is the neuron. Our brains contain roughly 86 billion neurons, interconnected by synapses that pass electrical and chemical signals. In 1943, Warren McCulloch and Walter Pitts proposed a mathematical model of the "artificial neuron," which forms the basis of what we now call Artificial Neural Networks (ANNs). The analogy maps onto three parts of the biological cell:

- Dendrites (Inputs): In deep learning, these are the data features fed into the system.

- Synapses (Weights): These represent the strength of the connection between nodes, which the algorithm adjusts during the training process.

- Axon (Output): The final processed signal that moves to the next layer of the network.
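The mapping above can be sketched as a single artificial neuron. This is a minimal illustration rather than any particular library's implementation: the sigmoid activation, the `neuron` function name, and the sample numbers are all assumptions chosen for the example.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs, then a sigmoid activation."""
    # "Dendrites" deliver the inputs; "synapses" scale each one by its weight.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The "axon" carries the activated signal on to the next layer.
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical feature values and connection strengths.
features = [1.0, 0.5, -1.0]
weights = [0.8, -0.4, 0.3]
output = neuron(features, weights, bias=0.0)
print(round(output, 4))
```

Training would adjust `weights` and `bias` so that `output` moves closer to a desired target; here they are fixed by hand to keep the sketch short.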

When many such neurons are stacked into layers, the resulting hierarchy allows tools like IBM Watson to process massive datasets by breaking down complex information into simpler, manageable abstractions, much like how our visual cortex processes light into edges, then shapes, and finally recognizable faces.

The Power of Layers: Hierarchical Representation

The "deep" in deep learning refers to the number of layers through which data is transformed. This design was inspired by the visual cortex, where different regions are responsible for different levels of abstraction. For example, in computer vision tasks such as those supported by NVIDIA AI, the initial layers might detect simple lines, while deeper layers identify complex textures and 3D structures.
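To make the idea of stacked layers concrete, here is a small sketch of a forward pass through two fully connected layers. The layer sizes, the hand-picked weights, and the ReLU activation are assumptions for illustration; a real vision model would learn its weights from data and would typically use convolutional layers.

```python
def relu(vector):
    """Keep positive activations and zero out the rest."""
    return [max(0.0, x) for x in vector]

def dense(inputs, weights, biases):
    """One fully connected layer; each row of `weights` feeds one output unit."""
    return [
        sum(x * w for x, w in zip(inputs, row)) + b
        for row, b in zip(weights, biases)
    ]

def forward(x, layers):
    """Pass x through every layer, applying ReLU after all but the last."""
    for weights, biases in layers[:-1]:
        x = relu(dense(x, weights, biases))
    weights, biases = layers[-1]
    return dense(x, weights, biases)

# Hypothetical two-layer network: 2 inputs -> 2 hidden units -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [0.0]),
]
result = forward([2.0, 1.0], layers)
print(result)  # [2.5]
```

Each layer consumes the previous layer's output, which is what lets later layers represent combinations of the simpler features computed earlier.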

This biological inspiration has led to breakthroughs in 3D modeling and generation. Tools like Meshy AI and GET3D leverage these deep hierarchical layers to translate 2D prompts into complex 3D assets, essentially "imagining" depth and form in a way that parallels human spatial reasoning.

From Analytical AI to Generative Excellence

Initially, deep learning was primarily analytical, designed to classify and predict. However, the industry has transitioned toward Generative AI, inspired by the brain's ability not just to recognize patterns, but to synthesize new information. The attention mechanism, popularized by the seminal 2017 paper "Attention Is All You Need," which introduced the Transformer architecture, loosely mimics the human cognitive ability to focus on specific parts of an input while ignoring others.
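As a rough sketch of how attention "focuses" on some inputs more than others, the snippet below computes scaled dot-product attention for a single query. It is a simplified, single-head illustration in plain Python; the vectors are made up, and production implementations operate on batched matrices with learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt(dimension).
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    # Output is the attention-weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical keys and values for three input positions.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([1.0, 0.0], keys, values)
print(weights, output)
```

Positions whose keys align with the query receive larger weights, so their values dominate the output; the second position, whose key is orthogonal to the query, contributes least.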

Today, this inspiration powers global leaders in the generative space:

- Google Gemini: A multimodal powerhouse capable of reasoning across text, code, and video.

- Claude (by Anthropic): Focused on "Constitutional AI," aiming to align AI responses with human values, much like social conditioning in humans.

- Tencent Hunyuan: A massive-scale model demonstrating how deep learning can handle the nuances of diverse linguistic structures.

Real-World Data: The Scale of the Inspiration

Demand for the hardware required to simulate this biological complexity has grown rapidly. According to recent industry reports, the AI infrastructure market, dominated by NVIDIA AI, is projected to exceed $200 billion by 2030. This investment is driven by the need for massive compute power to train models like those behind ChatGPT, which contain billions of parameters, loosely the digital equivalent of synaptic connections.
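To give a sense of what "parameters" means here, the sketch below counts the weights and biases in a small fully connected network. The layer sizes are hypothetical and far smaller than any production model; the point is only that parameter counts grow with the product of adjacent layer widths.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    Each layer with n_in inputs and n_out outputs has n_in * n_out
    weights plus n_out biases.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out
    return total

# Hypothetical small network: 784 inputs, 128 hidden units, 10 outputs.
small_network = count_parameters([784, 128, 10])
print(small_network)  # 101770
```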

Furthermore, the open-source community, centered around platforms like Hugging Face, allows researchers to share "pre-trained" models. This is analogous to "transfer learning" in humans, where knowledge from one domain (like learning to ride a bike) can be applied to another (like learning to ride a motorcycle).
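In machine learning terms, reusing a pre-trained model often means freezing the layers that were already learned and training only a small new output layer. The sketch below illustrates that pattern in plain Python. Everything in it is an assumption for illustration: `PRETRAINED_WEIGHTS` stands in for weights downloaded from a model hub, and the toy dataset and learning rate are invented.

```python
# Stand-in for a shared pre-trained feature extractor; these weights are
# frozen, meaning the training loop below never modifies them.
PRETRAINED_WEIGHTS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

def extract_features(x):
    """Frozen layer: weighted sums followed by ReLU."""
    return [
        max(0.0, sum(xi * w for xi, w in zip(x, row)))
        for row in PRETRAINED_WEIGHTS
    ]

def predict(x, head):
    """Apply the trainable output layer on top of the frozen features."""
    return sum(f * h for f, h in zip(extract_features(x), head))

def train_head(data, epochs=200, lr=0.05):
    """Fit only the new output layer with stochastic gradient descent."""
    head = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, target in data:
            feats = extract_features(x)
            error = predict(x, head) - target
            # Gradient of squared error with respect to each head weight.
            head = [h - lr * error * f for h, f in zip(head, feats)]
    return head

# Toy "new task": the target is x1 + 2 * x2.
data = [([1.0, 0.0], 1.0), ([0.0, 1.0], 2.0), ([1.0, 1.0], 3.0)]
head = train_head(data)
prediction = predict([1.0, 1.0], head)
print(round(prediction, 3))
```

Because only the three `head` weights are updated, the new task is learned with far less data and compute than training the whole network from scratch would need.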

Future Directions: Neuromorphic Computing

As we look forward, the inspiration continues to deepen. Engineers are now exploring Neuromorphic Computing—hardware designed to physically resemble the brain's architecture to achieve higher energy efficiency. While current GPUs are powerful, they consume vast amounts of energy compared to the human brain, which operates on roughly 20 watts.

We are also seeing the rise of specialized research agents. Tools like OpenAI Deep Research represent the next step: moving from static responses to active, iterative reasoning, bringing us closer than ever to the dream of Artificial General Intelligence (AGI).

Conclusion

The main inspiration behind deep learning remains what is often called the most complex object in the known universe: the human brain. By mimicking its layered structure and synaptic plasticity, we have unlocked technologies that were once the stuff of science fiction. Whether you are generating 3D art with Luma Genie or analyzing complex databases with AskYourDatabase, you are interacting with a digital reflection of our own cognitive architecture.